Simply having a website is no longer sufficient to thrive in the rapidly evolving digital landscape of today. To truly distinguish oneself, a robust user experience (UX) and effective implementation of search engine optimization (SEO) are essential components. Improving your website’s SEO can enhance your online visibility, whereas having a superior user experience can lead to an increase in leads.

Throughout my 15+ years in digital marketing, I have consistently collaborated with clients and developers to create websites that are both user- and SEO-friendly. However, many clients build the entire website without involving the SEO team, which creates problems for everyone involved once the website goes live: the client (who must pay for the requested revisions), the developer (who must redo the work), and the SEO (who must review the entire website, request changes, and wait for them to be made).

Drawing on my SEO consulting experience, I am writing this post to help web developers build an SEO-friendly website from the outset, which is a win-win for all stakeholders.

I am excited to share with you this comprehensive list of factors that need to be considered while developing a website.

This post will take less than 15 minutes to read. So, sit back, relax, read, and understand these important factors which are sure to expand your knowledge and understanding of effective & SEO-friendly website development.

Responsive Website at a Single URL Serving All Devices

In today’s digital age, having a website is essential for businesses & individuals who want to establish an online presence. However, simply having a website for desktops is not enough. It must be designed to be responsive, meaning the layout should be compatible with any screen size, whether it’s a desktop computer, tablet, or smartphone.

Many developers used to design a separate layout on a separate URL for each device or resolution, but that added a lot of work for everyone. The client had to pay more and manage a greater number of pages and layouts, the developer had to build many different layouts, and the SEO had to optimize each page separately.

This is where having a single URL that serves all devices & resolutions comes into play. In this section, we will discuss the importance of having a responsive website with a single URL serving all devices, and how you can check if your website is mobile-friendly.

Why should a website be responsive with a single URL serving all devices?

Having a responsive website with a single URL serving all devices has numerous benefits, including:

- Improved User Experience: A responsive website ensures that users have a consistent experience across all devices. The content is easy to read and navigate, and the website’s functionality is not compromised, regardless of the device being used.

- Better Search Engine Ranking: Google prioritizes mobile-friendly websites when ranking in search results. Therefore, having a responsive website with a single URL serving all devices can significantly improve your website’s search engine ranking.

How to Check if Your Website is Mobile-Friendly?

It is simple, thanks to Google’s Mobile-Friendly Test.

Here are the steps to follow:

- Go to https://search.google.com/test/mobile-friendly

- Enter your website URL in the provided field and click on the “Test URL” button.

- Wait for the test results, which will show if your website is mobile-friendly or not.
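Alongside the online test, a quick local check can catch one common cause of mobile problems: a missing viewport declaration. The sketch below is only an illustration, not part of any Google tool. It assumes that a responsive page declares `<meta name="viewport" content="width=device-width, ...">`, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Remembers the content of the last <meta name="viewport"> tag seen."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "viewport":
            self.viewport = attrs.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """Return True if the page declares a device-width viewport."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    # Ignore spacing and case differences such as "Width = device-width".
    return "width=device-width" in finder.viewport.replace(" ", "").lower()
```

A viewport tag alone does not make a layout responsive, so treat this as a first sanity check rather than a replacement for testing on real devices.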

Website Loading Speed

In today’s fast-paced digital world, a website’s loading speed is a critical factor in the success of any online marketing campaign. With the rise of mobile devices and the increased importance of search engine optimization, a website’s speed score has become a vital factor in determining its success.

Google’s PageSpeed Insights is an essential resource that can help you evaluate your website’s speed and understand what is causing issues with it (if any).

Why is Website Speed Score Important?

Website speed score is a critical factor that impacts several key aspects of a website’s success, including:

- User Experience: A fast-loading website ensures that users have a positive experience and remain engaged with the site. Slow loading times can lead to frustration and high bounce rates, which negatively impact the overall user experience.

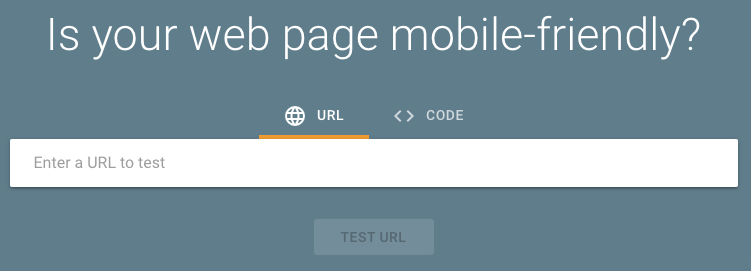

- Search Engine Ranking: Google considers website speed one of the ranking factors in its search algorithms. Google has also introduced Core Web Vitals, a set of page speed and responsiveness metrics that are now part of its Page Experience signals. All else being equal, a fast-loading website is more likely to rank higher in search results than a slow one.

- Conversion Rates: Website speed plays a crucial role in influencing the conversion rates of a website. A faster website leads to higher conversions and, as a result, increases revenue.

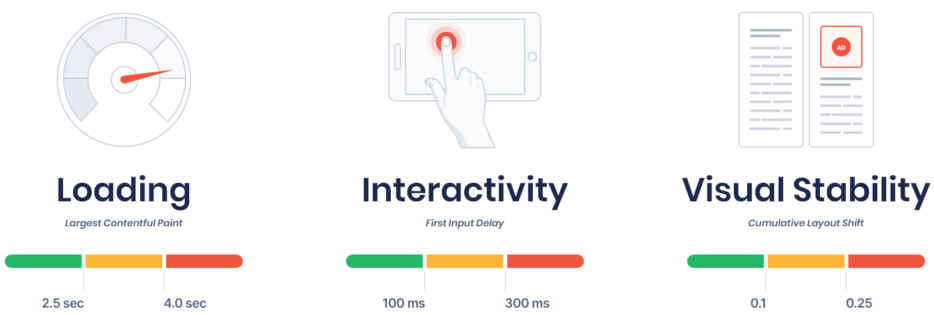

How to Check Website Speed Score?

Google’s PageSpeed Insights is a free tool that measures website speed for both desktop and mobile devices. Here are the steps to follow:

- Go to https://developers.google.com/speed/pagespeed/insights/.

- Enter your website URL and click on “Analyze.”

- Wait for the tool to analyze your website and generate the speed score.

How to Improve Website Speed Score?

Page loading speed can be improved by implementing the following strategies:

- Image Optimization: Optimizing images can significantly improve website speed. Use image compression tools to reduce file size without sacrificing quality. Alternatively, serve your images in WebP or SVG format.

- Minimize HTTP Requests: Reducing the number of HTTP requests can significantly improve website speed. Combining CSS and JavaScript files, reducing the number of images, and using browser caching are all effective ways to do this.

- Minifying CSS & JS: Minified CSS and JS files are smaller, so they download faster and speed up page loading.

- Page Caching: Caching speeds up content delivery because retrieving content from a cache typically takes less time than retrieving it from the origin server, which reduces latency.

- Use a Content Delivery Network (CDN): A CDN is a network of servers that help deliver content to users faster by storing a copy of your website on multiple servers across the globe. This reduces the distance content has to travel, resulting in faster loading times.
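To see how many HTTP requests a page is likely to trigger, you can count the external resources it references. The following is a rough sketch under simple assumptions: it only looks at `<script src>`, `<link rel="stylesheet">`, and `<img src>` tags, and ignores fonts, CSS background images, and anything loaded by JavaScript. The names `ResourceCounter` and `count_requests` are illustrative.

```python
from collections import Counter
from html.parser import HTMLParser


class ResourceCounter(HTMLParser):
    """Counts external scripts, stylesheets, and images referenced by a page."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower()
        if tag == "script" and attrs.get("src"):
            self.counts["script"] += 1
        elif tag == "link" and "stylesheet" in rel and attrs.get("href"):
            self.counts["stylesheet"] += 1
        elif tag == "img" and attrs.get("src"):
            self.counts["image"] += 1


def count_requests(html: str) -> dict:
    """Return a rough count of external resources per type."""
    counter = ResourceCounter()
    counter.feed(html)
    return dict(counter.counts)
```

If the stylesheet or script count is high, those files are candidates for combining. If the image count is high, consider whether every image is needed.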

External CSS & JS Files

CSS and JS should be loaded from external files only. No CSS or JavaScript code should be written directly in the HTML page source (unless it is mandatory).

To ensure that your website performs at its best, keep styles and scripts in separate .css and .js files and reference them from the page. Browsers can cache these files and reuse them across pages, which can improve your website’s speed, performance, and maintainability.
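One way to enforce the rule that CSS and JavaScript code should not sit in the page source is to scan templates for embedded code before launch. The sketch below is a hypothetical helper, not a standard tool. It flags `<style>` blocks, `<script>` tags without a `src`, and `style` attributes, and it assumes JSON-LD structured data blocks are acceptable and skips them.

```python
from html.parser import HTMLParser


class InlineCodeFinder(HTMLParser):
    """Records where CSS or JavaScript is embedded directly in the HTML."""

    def __init__(self):
        super().__init__()
        self.findings = []  # list of (line number, description)

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        line, _ = self.getpos()
        if tag == "style":
            self.findings.append((line, "<style> block"))
        elif tag == "script" and "src" not in attrs:
            # Assumption: structured data (JSON-LD) is allowed inline.
            if (attrs.get("type") or "").lower() != "application/ld+json":
                self.findings.append((line, "inline <script>"))
        if "style" in attrs:
            self.findings.append((line, f"style attribute on <{tag}>"))


def find_inline_code(html: str) -> list:
    """Return (line, description) pairs for every piece of embedded CSS/JS."""
    finder = InlineCodeFinder()
    finder.feed(html)
    return finder.findings
```

An empty result means the page only references external files, which is the state this section recommends.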

Website Should Have an SSL Certificate

In today’s digital age, having a website with an SSL certificate configured is a necessity. An SSL certificate ensures that the data exchanged between the user’s web browser and the website’s server is encrypted, which makes it nearly impossible for hackers to intercept and access sensitive information. This level of security not only helps to protect the website and its visitors from potential cyber-attacks, but it also fosters trust and credibility among users.

Additionally, search engines also favor websites that have an SSL certificate, which can result in improved search engine rankings and increased visibility for the website. Therefore, it’s crucial for any website owner to ensure that their website is secured with an SSL certificate.

SEO & User-Friendly URLs

Including the page name in the URL is an important best practice from an SEO and usability point of view. Using a dash as the word separator makes the URL easier for users and search engines to read and understand.

For example, a URL like www.domain.com/file-name.php is more informative and user & search engine friendly than a URL like www.domain.com/6789.php, which doesn’t provide any meaningful information.

Additionally, search engines can use the page name as a signal to understand the content of the page and rank it accordingly. Overall, a clear and descriptive URL structure can enhance the user experience and improve the website’s visibility and search engine rankings. Just keep the URL short.
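Turning a page name into a dash-separated URL segment can be automated. Here is a minimal sketch. The function name `slugify` and the 60-character limit are assumptions chosen for illustration, not an official recommendation.

```python
import re
import unicodedata


def slugify(page_name: str, max_length: int = 60) -> str:
    """Convert a page name into a lowercase, dash-separated URL segment."""
    # Split accented characters into base letter + accent, then drop the accent.
    text = unicodedata.normalize("NFKD", page_name)
    text = text.encode("ascii", "ignore").decode("ascii").lower()
    # Replace every run of non-alphanumeric characters with a single dash.
    text = re.sub(r"[^a-z0-9]+", "-", text).strip("-")
    # Keep the slug short and avoid ending on a dash after truncation.
    return text[:max_length].rstrip("-")
```

For example, `slugify("SEO & User-Friendly URLs")` yields `seo-user-friendly-urls`, which could then be used as `www.domain.com/seo-user-friendly-urls/`.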

Consistent URL Structure is Essential

URL rewriting is an important aspect of website design and development. It refers to the practice of modifying the URL of a webpage to make it more user-friendly and descriptive. The goal is to create a consistent URL structure that is easy to read, understand, and remember. This can be achieved by removing unnecessary characters, adding relevant keywords, and using a hierarchical structure that reflects the content of the page.

If you are redesigning a website, it is important to make sure that the URL structure doesn’t change in the new website. If a change is unavoidable, do not forget to add 301 redirects from the old URLs to the new ones.

To maintain consistency in the URL structure, it is important to avoid capital letters and special characters. They can cause confusion and make it harder for users to remember or type the URL correctly. It is best to stick to lowercase letters for all URLs on the website.

Another important aspect of URL consistency is the use of extensions. Many developers use extensions such as “.html”, “.aspx”, “.php”, but it is recommended to have no extension at all (just a trailing slash). This is important for several reasons. First, it helps to maintain a consistent look and feel across the website. Second, it will not change when you upgrade the website using another technology. Finally, it can help prevent broken links and errors that can occur when URLs change.
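The consistency rules above (lowercase, no file extension, trailing slash) can be captured in one small helper. This is a sketch only. `normalize_path` and the list of extensions are assumptions, and a real site would apply the same rules in its router or server rewrite configuration.

```python
import re

# Extensions to strip; extend this tuple to match your own stack.
KNOWN_EXTENSIONS = (".html", ".htm", ".php", ".aspx")


def normalize_path(path: str) -> str:
    """Return the path in lowercase, without extension, with a trailing slash."""
    path = path.lower()
    for ext in KNOWN_EXTENSIONS:
        if path.endswith(ext):
            path = path[: -len(ext)]
            break
    # Collapse accidental double slashes such as "/blog//post".
    path = re.sub(r"/+", "/", path)
    if not path.startswith("/"):
        path = "/" + path
    if not path.endswith("/"):
        path += "/"
    return path
```

With this helper, `/Services/Web-Design.PHP` and `/services/web-design/` both map to the same address, so the URL survives a later change of technology.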

Check Search Engine Crawlability

Google and other search engine crawlers are very smart nowadays, but there are still a few things they cannot crawl. Check with the SEO team if you have any doubts about the crawlability of specific sections where you have used complex JS, Flash, etc.

No Index, No Follow During Development Phase

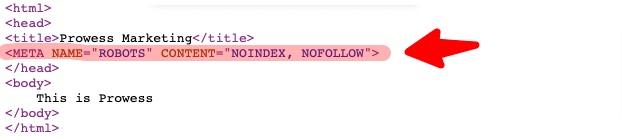

It is very important to add the noindex, nofollow meta tag (as shown in the screenshot) to all pages of the website while it is under development or on a development server.

This tag tells search engine bots not to index the pages or follow the links on them, which prevents premature indexing of unfinished or error-prone pages.

However, it’s essential to remove this tag once the website goes live, so search engines can crawl and index the website properly.
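Because a leftover tag can keep a live site out of search results, it helps to check for it automatically at launch. The sketch below assumes the tag takes the common form `<meta name="robots" content="noindex, nofollow">`. The helper names are hypothetical, and the check does not cover the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives from every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def blocks_indexing(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives
```

On a development server `blocks_indexing` should return `True` for every page, and on the live site it should return `False` for every page you want to appear in search results.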

Avoid Black Hat SEO Technique

Using black hat SEO techniques, such as hidden text, hidden links, cloaking, and meta refresh tags, violates search engine guidelines and can get a website penalized or banned (removed from the search engine’s index).

Developers may use some of these by mistake, but they can still have a major impact on your website’s online success. So, to achieve long-term success, it is best to focus on ethical and legitimate SEO strategies.

URL Redirects

When redirecting a page, it’s generally best to use a 301 redirect unless the redirect is only temporary.

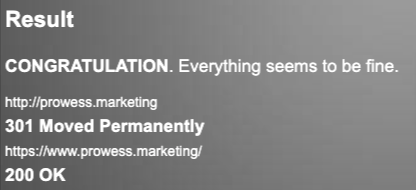

Permanent redirects should always be a 301 redirect. This type of redirect informs search engines that the original URL has permanently moved to a new location. This ensures that link equity and ranking signals are transferred to the new URL, which helps to maintain search engine visibility and preserve user experience. Temporary redirects should use a 302 redirect instead.

When redesigning a website, it is crucial to maintain the URL structure of the existing site in the new version. However, if changes to the URLs are necessary, it is essential to provide a 301 redirect from the old URLs to their corresponding new ones.
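A redirect map keeps old and new URLs paired in one place and makes the choice between 301 and 302 explicit. The following sketch uses made-up paths and a hypothetical `resolve_redirect` helper. In practice the same mapping would usually live in the web server or framework configuration.

```python
from typing import Optional, Tuple

# Old path -> (new path, status code). All paths here are illustrative.
REDIRECTS = {
    "/old-page.php": ("/new-page/", 301),      # page moved permanently
    "/summer-sale/": ("/holiday-sale/", 302),  # temporary campaign swap
}


def resolve_redirect(path: str) -> Optional[Tuple[int, str]]:
    """Return (status, target) if the path should redirect, else None."""
    entry = REDIRECTS.get(path)
    if entry is None:
        return None
    target, status = entry
    return status, target
```

Keeping the status code next to each entry makes it easy to review that every permanent move really uses a 301.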

Keep the Code Clean and Compact

“The less the code, the better the crawling” is a search engine optimization principle that emphasizes the importance of keeping website code clean, neat, and compact. This makes it easier for search engine crawlers to index and understand the website’s content.

Additionally, clean and compact code reduces page size, which helps pages load faster.

Include a Robots.txt File by Default

Robots.txt is a file used to tell search engine crawlers and other web robots which pages or directories of a website they may or may not crawl.

The default setting for the Robots.txt file should allow all web robots to crawl the website.

The default configuration for the Robots.txt file should be as follows:

User-agent: *

Allow: /

The “*” symbol in the User-agent field means that the settings apply to all web robots. The “Allow: /” directive specifies that all pages and directories on the website can be crawled by search engine bots.

This default setting ensures that the website can be easily discovered by search engines and its pages can be indexed, which is essential for the website’s visibility and search engine optimization. However, for specific use cases, webmasters may need to modify the Robots.txt file to restrict access to certain pages or directories.
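You can verify what a Robots.txt file permits before deploying it. The sketch below uses the `urllib.robotparser` module from Python’s standard library. The `is_crawlable` wrapper and the example URLs are assumptions for illustration, and real crawlers may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# The default configuration described above.
DEFAULT_ROBOTS_TXT = """\
User-agent: *
Allow: /
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

With the default file every URL is allowed. If you later add a line such as `Disallow: /admin/`, the same function shows which paths that rule blocks.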

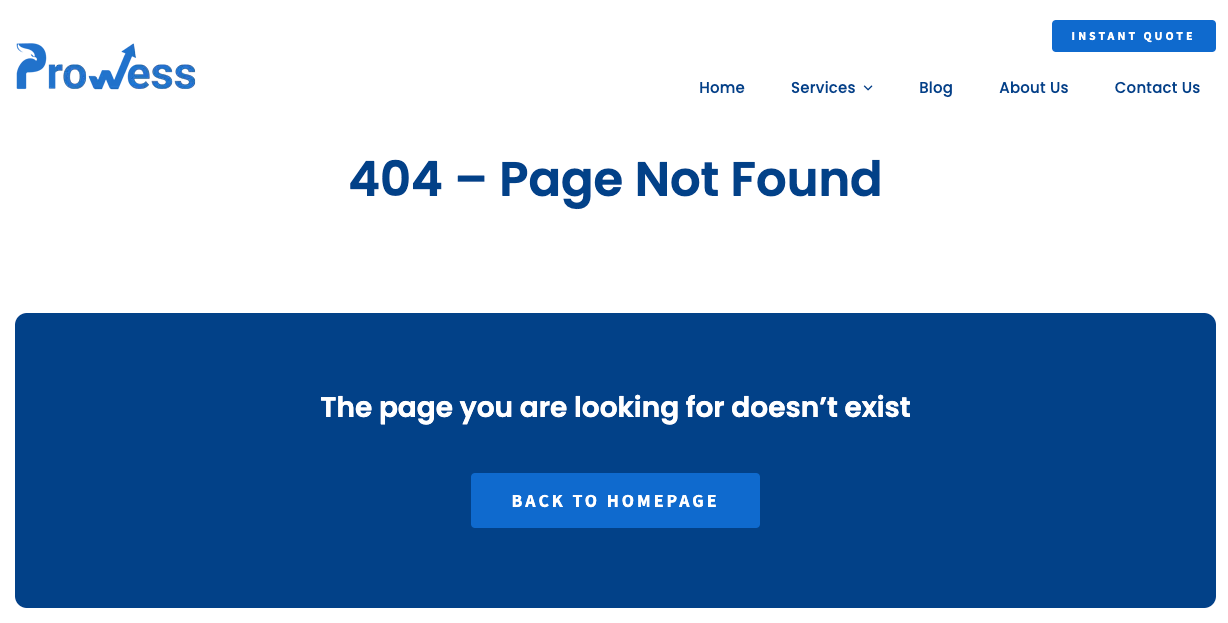

Custom 404 page

If you want to provide your website visitors with a better user experience, having a custom 404 page is essential. When a page is not found, a custom 404 page serves as a backup plan and informs users that the page they are trying to access does not exist and they can navigate to other alternate pages. This helps to prevent visitors from leaving your website due to frustration or confusion.
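A custom 404 page should still return the 404 status code, so that search engines do not treat the missing page as real content. Below is a minimal sketch written as a plain WSGI application. The page contents, the `/sitemap/` link, and the `PAGES` dictionary are illustrative assumptions.

```python
# Known pages of this toy site; anything else is "not found".
PAGES = {"/": "<h1>Home</h1>"}

NOT_FOUND_HTML = (
    "<h1>Page not found</h1>"
    '<p>Try the <a href="/">home page</a> or the '
    '<a href="/sitemap/">sitemap</a>.</p>'
)


def app(environ, start_response):
    """Serve known pages, and a helpful custom page with a 404 status otherwise."""
    path = environ.get("PATH_INFO", "/")
    if path in PAGES:
        status, body = "200 OK", PAGES[path]
    else:
        status, body = "404 Not Found", NOT_FOUND_HTML
    payload = body.encode("utf-8")
    start_response(status, [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(payload))),
    ])
    return [payload]
```

To try it locally, the app can be served with `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` from the standard library.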

Some more tips for optimizing your website for search engines:

- Title Tag: Make sure each page has a unique name in the title tag. For example, your title tag should look like this: `<title>Page Name</title>`.

- Meta Description: Include a brief snippet of your page’s content in the meta description tag. For example: `<meta name="description" content="Page Description" />`.

- Heading Tags: Use only one H1 tag, two H2 tags, and a few H3 tags, all unique to each page. For example: `<h1>Page Name</h1>`. Avoid using heading tags in common sections like the header, sidebar, and footer.

- HTML Sitemap: Make sure the website has an HTML sitemap, which can be generated dynamically or created as a static page.

- XML Sitemap: Adding an XML sitemap will help search engines locate and crawl all the web pages on your website.

- Canonical Issues: Ensure that the non-www version of your website redirects to the www version OR vice-versa using a 301 redirect. For example, http://domain.com/ should redirect to http://www.domain.com/. Also, make sure that the root page (index.php, index.html, or index.aspx) redirects to the root slash. i.e. http://domain.com/index.php should redirect to http://www.domain.com/.

- Broken Links: Finally, avoid having any broken links on your website. This may seem obvious, but it’s important to double-check that all your links are working properly.
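Of the tips above, the XML sitemap is the easiest to generate automatically. The sketch below builds a minimal sitemap with Python’s standard library. The `build_sitemap` function and the example URLs are assumptions, and optional fields such as `lastmod` are left out.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> str:
    """Return a minimal XML sitemap listing each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")
```

Calling `build_sitemap(["https://www.example.com/", "https://www.example.com/about/"])` returns the XML as a string, which you could save as `sitemap.xml` in the site root.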

Conclusion

In conclusion, developers who follow the best practices in this post will be able to launch an SEO- and user-friendly website, with very little back and forth between the client and the SEO team.

Here are some key takeaways:

- Strive for perfection in your initial website creation to avoid costly rework in the future.

(It’s always better to do it right the first time, even if it takes a little longer, because reworking and fixing mistakes will cost you more time and effort in the end.)

- You will be able to launch a technically SEO-friendly website that can give you some initial boost.

- You will be able to set yourself apart from the classic developers who concentrate only on coding output, and not on SEO & User-Friendliness of the website.

By following these key practices and staying up-to-date with industry developments, you can help your clients launch a website that is aligned with their goals. So, take the opportunity to unleash the power of SEO in your web development strategy and build a reputation as a skilled and reliable developer. Good luck!